Softplus

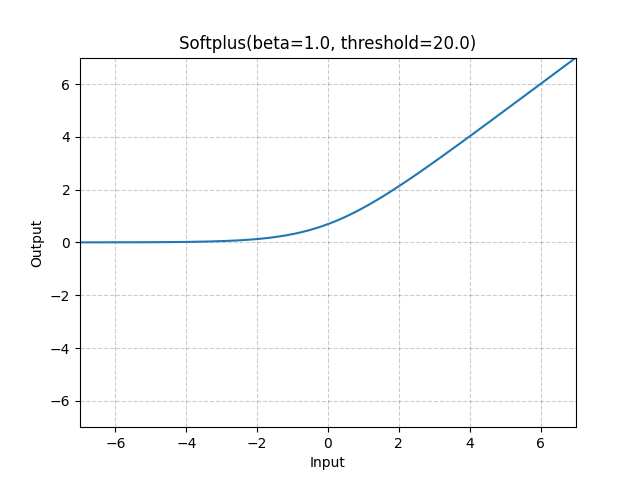

- class torch.nn.Softplus(beta=1.0, threshold=20.0)

Applies the Softplus function element-wise.

\[\text{Softplus}(x) = \frac{1}{\beta} * \log(1 + \exp(\beta * x))\]

Softplus is a smooth approximation to the ReLU function and can be used to constrain the output of a model to always be positive.
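To make the formula concrete, here is a minimal pure-Python sketch that evaluates it on scalars and compares it with ReLU. The helper names `softplus` and `relu` are illustrative and not part of PyTorch. The sketch transcribes the formula directly and does not reproduce the library's implementation.

```python
import math


def softplus(x: float, beta: float = 1.0) -> float:
    """Direct transcription of (1 / beta) * log(1 + exp(beta * x))."""
    # log1p(y) computes log(1 + y) accurately when y is small.
    return math.log1p(math.exp(beta * x)) / beta


def relu(x: float) -> float:
    """Reference ReLU, used here only for comparison."""
    return max(0.0, x)


# Softplus stays strictly positive, and a larger beta hugs ReLU more closely.
for x in (-3.0, -1.0, 0.0, 1.0, 3.0):
    print(
        f"x={x:+.1f}  relu={relu(x):.4f}  "
        f"softplus(beta=1)={softplus(x):.4f}  "
        f"softplus(beta=10)={softplus(x, beta=10.0):.4f}"
    )
```

At `x = 0` the function evaluates to `log(2) / beta`, so increasing `beta` shrinks the gap to ReLU around the origin.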

For numerical stability, the implementation reverts to the linear function (returning the input unchanged) when \(input \times \beta > threshold\).
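The effect of the threshold can be illustrated with a small pure-Python sketch. The names `softplus_naive` and `softplus_stable` are hypothetical helpers written for this illustration. They mirror the rule described above on scalars and are not PyTorch's actual code.

```python
import math


def softplus_naive(x: float, beta: float = 1.0) -> float:
    """Formula as written; exp() overflows for large beta * x."""
    return math.log1p(math.exp(beta * x)) / beta


def softplus_stable(x: float, beta: float = 1.0, threshold: float = 20.0) -> float:
    """Same formula, but linear once beta * x exceeds the threshold."""
    if beta * x > threshold:
        return x  # log(1 + exp(z)) is numerically indistinguishable from z here
    return math.log1p(math.exp(beta * x)) / beta


# Just above the threshold the two versions agree to within rounding error.
print(abs(softplus_stable(25.0) - softplus_naive(25.0)))

# Far above it, the naive version fails while the stable one returns x.
try:
    softplus_naive(1000.0)
except OverflowError as err:
    print("naive version overflowed:", err)
print(softplus_stable(1000.0))
```

With the default `threshold=20.0`, the error from switching to the linear branch is on the order of `exp(-20)`, which is negligible at single precision.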

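- Parameters:

  - beta (float): the \(\beta\) value in the Softplus formula. Default: 1.0
  - threshold (float): values of \(input \times \beta\) above this revert to the linear function. Default: 20.0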

- Shape:

Input: \((*)\), where \(*\) means any number of dimensions.

Output: \((*)\), same shape as the input.

Examples:

    >>> import torch
    >>> import torch.nn as nn
    >>> m = nn.Softplus()
    >>> input = torch.randn(2)
    >>> output = m(input)