LeakyReLU

- class torch.nn.LeakyReLU(negative_slope=0.01, inplace=False)

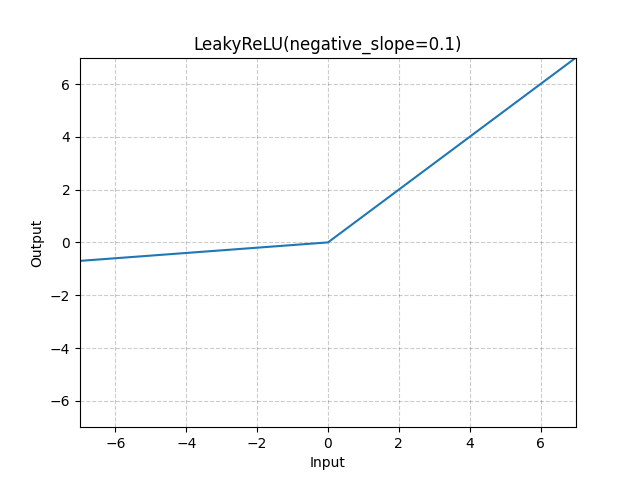

Applies the LeakyReLU function element-wise.

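\[\text{LeakyReLU}(x) = \max(0, x) + \text{negative\_slope} \times \min(0, x)\]

or, equivalently,

\[\text{LeakyReLU}(x) = \begin{cases} x, & \text{if } x \geq 0 \\ \text{negative\_slope} \times x, & \text{otherwise} \end{cases}\]

- Parameters:

negative_slope (float): Controls the angle of the negative slope, which is applied to negative input values. Default: 0.01

inplace (bool): If True, performs the operation in place. Default: False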

- Shape:

Input: \((*)\), where \(*\) means any number of dimensions

Output: \((*)\), same shape as the input
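Because the function is applied element-wise, the output shape always matches the input shape. The following is a minimal sketch of that behavior; the input values and the slope of 0.1 are arbitrary choices made for illustration, not values taken from this page.

```python
import torch
from torch import nn

# Illustrative input (arbitrary values): a 2 x 3 tensor with negative,
# zero, and positive entries.
x = torch.tensor([[-2.0, -0.5, 0.0],
                  [0.5, 1.0, 3.0]])

# negative_slope=0.1 is chosen here only to make the scaling easy to read.
m = nn.LeakyReLU(negative_slope=0.1)
out = m(x)

# Negative entries are multiplied by 0.1; non-negative entries pass through.
expected = torch.tensor([[-0.2, -0.05, 0.0],
                         [0.5, 1.0, 3.0]])

print(out.shape == x.shape)
print(torch.allclose(out, expected))
```

The same module can be applied to tensors of any rank, since no dimension is treated specially.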

Examples:

>>> import torch
>>> from torch import nn
>>> m = nn.LeakyReLU(0.1)
>>> input = torch.randn(2)
>>> output = m(input)
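The two definitions of LeakyReLU given earlier describe the same function. As a rough check, the sketch below implements both for plain Python floats and compares them on a few sample points. The helper names `leaky_relu_max_min` and `leaky_relu_piecewise` are hypothetical and are not part of PyTorch; this is a scalar reference for the formulas, not how the library computes the result.

```python
def leaky_relu_max_min(x: float, negative_slope: float = 0.01) -> float:
    """Hypothetical helper: max(0, x) + negative_slope * min(0, x)."""
    return max(0.0, x) + negative_slope * min(0.0, x)


def leaky_relu_piecewise(x: float, negative_slope: float = 0.01) -> float:
    """Hypothetical helper: x if x >= 0, otherwise negative_slope * x."""
    return x if x >= 0 else negative_slope * x


# Arbitrary sample points covering negative, zero, and positive inputs.
samples = [-3.0, -0.5, 0.0, 0.5, 3.0]
slope = 0.1

for value in samples:
    a = leaky_relu_max_min(value, slope)
    b = leaky_relu_piecewise(value, slope)
    # Both forms should agree at every sample point.
    print(value, a, b, a == b)
```

For non-negative inputs the `min` term is zero, and for negative inputs the `max` term is zero, which is why the sum reduces to the piecewise form.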